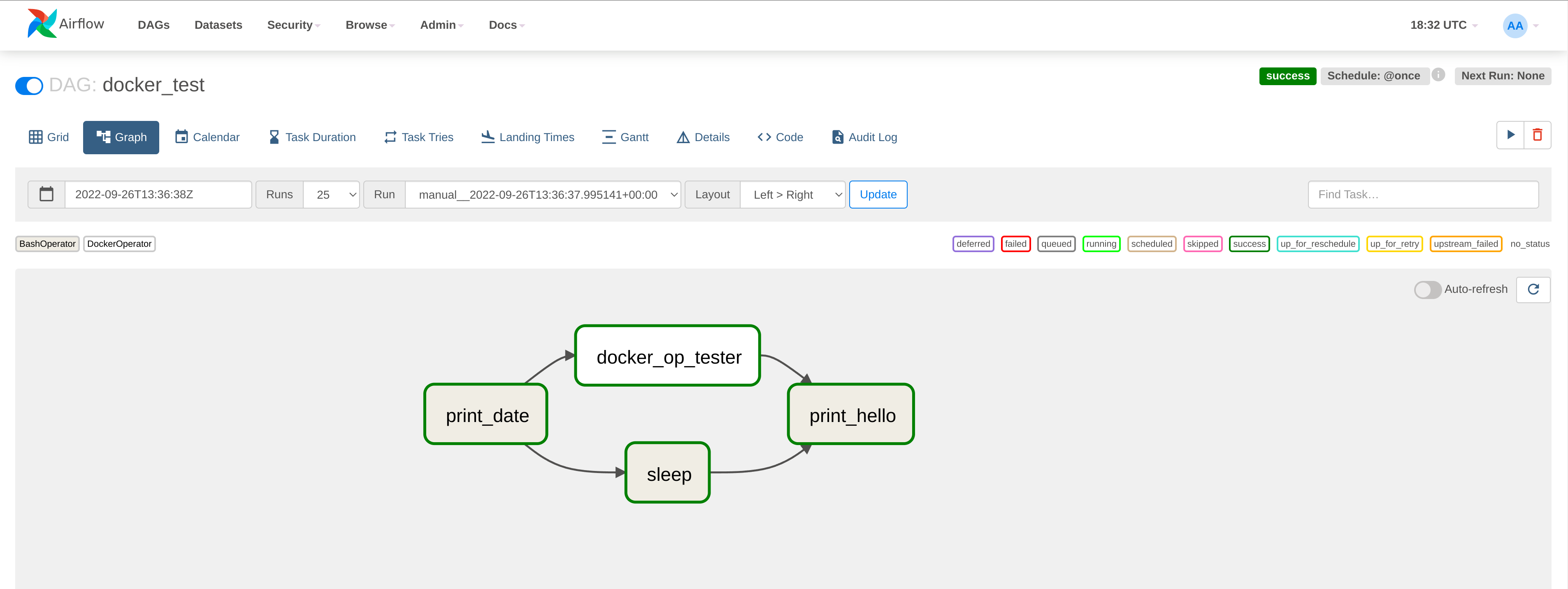

No the DAG still fails with the following log error: I added - /var/run/docker.sock:/var/run/docker.sock to the volumes section in my airflow docker-compose file. My DAG script looks like this: from airflow import DAGįrom .docker import DockerOperatorĭescription='Testing the docker operator',Ĭommand='echo "this is a test message shown from within the container',Īfter digging around a bit, I assumed that this is a Docker-in-Docker issue, the most likely solution was found in this tutorial. So far so good, however if I trigger the DAG with my docker-operator it fails and I get a severalįileNotFoundError: No such file or directory errors in the logs. Running this from the CLI with docker run -name docker-test-container docker-test-image will give me the expected output: Hello World

I can create my image with docker build -t docker-test-image. Which together with the following simple docker-file FROM python:3.9 To test this I have created a simple "Hello World!" example-script: import numpy as np This works fine, however I would like to move the python scripts, which are my tasks out of the mounted plugins folder and into their own docker containers. I have a docker container running on my windows machine, which was build with an adapted version of the docker-compose file provided in the official docs.
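One thing worth ruling out is whether the mounted socket is actually visible from inside the container that executes the task. This is an assumption on my part, not something the log above confirms. A Docker client that cannot find the daemon socket typically fails with `FileNotFoundError: No such file or directory`. The following is a minimal diagnostic sketch using only the Python standard library. The helper name `check_docker_socket` is hypothetical. It assumes the socket should appear at `/var/run/docker.sock`, as in the volume mount above, and it pings the Docker Engine API's `/_ping` endpoint over that Unix socket.

```python
import os
import socket
from typing import Tuple

# Path the docker-compose volume mount is expected to expose inside the container.
DOCKER_SOCK = "/var/run/docker.sock"


def check_docker_socket(path: str = DOCKER_SOCK, timeout: float = 2.0) -> Tuple[bool, str]:
    """Hypothetical helper: report whether a Docker daemon answers on `path`.

    Returns (ok, detail), where detail is a short human-readable explanation.
    """
    if not os.path.exists(path):
        # The mount did not reach this container, so a Docker client here
        # would fail with "No such file or directory".
        return False, f"{path} does not exist"

    try:
        with socket.socket(socket.AF_UNIX, socket.SOCK_STREAM) as sock:
            sock.settimeout(timeout)
            sock.connect(path)
            # GET /_ping is a lightweight Docker Engine API endpoint that replies "OK".
            # An HTTP/1.0 request lets the daemon close the connection after
            # responding, so reading until EOF terminates.
            sock.sendall(b"GET /_ping HTTP/1.0\r\nHost: docker\r\n\r\n")
            response = b""
            while chunk := sock.recv(4096):
                response += chunk
    except OSError as exc:
        # Covers PermissionError (socket not accessible to this user),
        # ConnectionRefusedError and timeouts.
        return False, f"cannot talk to {path}: {exc}"

    # The first line of the reply is the HTTP status line, e.g. "HTTP/1.1 200 OK".
    status_line = response.split(b"\r\n", 1)[0].decode("ascii", errors="replace")
    return status_line.endswith(" 200 OK"), status_line


if __name__ == "__main__":
    ok, detail = check_docker_socket()
    print(("OK: " if ok else "PROBLEM: ") + detail)
```

Run this inside the Airflow container that executes the task, not on the Windows host. If it reports that the path does not exist, the volume mount is probably not reaching that container. If it reports a permission error, the socket is there, but the user the task runs as may not be allowed to use it.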
